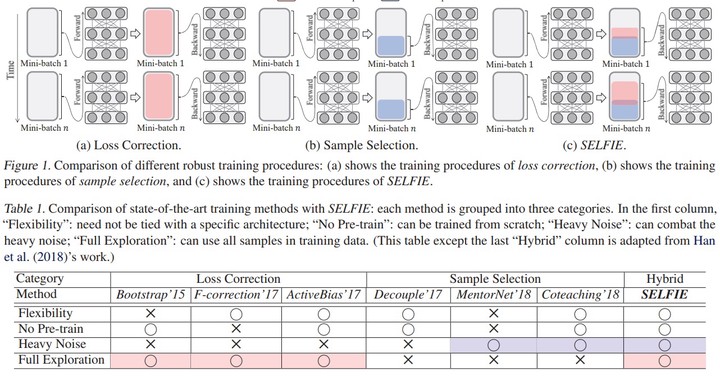

Abstract

Owing to the extremely high expressive power of deep neural networks, their side effect is to totally memorize training data even when the labels are extremely noisy. To overcome overfitting on the noisy labels, we propose a novel robust training method called SELFIE. Our key idea is to selectively refurbish and exploit unclean samples that can be corrected with high precision, thereby gradually increasing the number of available training samples. Taking advantage of this design, SELFIE effectively prevents the risk of noise accumulation from the false correction and fully exploits the training data. To validate the superiority of SELFIE, we conducted extensive experimentation using four real-world or synthetic data sets. The result showed that SELFIE remarkably improved absolute test error compared with two state-of-the-art methods.